PS2: I can generate a debug APK without any issue whatsoever. PS: Obviously, I am selecting my variant here during the process: In this talk, I would like to highlight the ideal upgrade path and talk about the upgradecheck CLI tool, separation of providers, registering connections types, important 2. However, companies and enterprises are still facing difficulties in upgrading to 2.0. I do it via AS and follow the very standard procedure. Can someone point out to me what I am missing here? I assume there is a way to select a specific build variant when generating a signed APK; how does it work? Airflow 2.0 was a big milestone for the Airflow community.

Nothing changed in my way of generating an APK. Now, I have been generating an APK every week for years, so I know my way around the folders, the different build variant output folders, etc. But when I try to generate a signed APK, I get a strange message after building telling me: APK(s) generated successfully for module 'android-mobile-app-XXXX.app' with 0 build variants: Even though the build seems to be successful, I cannot find the generated APK anywhere (and considering the time it takes to give me that error, I don't even think it is building anything). This page describes the steps to install Apache Airflow custom plugins on your Amazon MWAA environment using a plugins.zip file. Now I can compile my project and launch my app on my mobile; everything is working. I just updated my Android Studio to version 2021.1.1 Canary 12. After struggling to make it work, I also had to upgrade my Gradle and Gradle plugin to 7.0.2. The permanent shutdown is not until March 15th. As in actions/checkout issue 14, you can add as a first step:

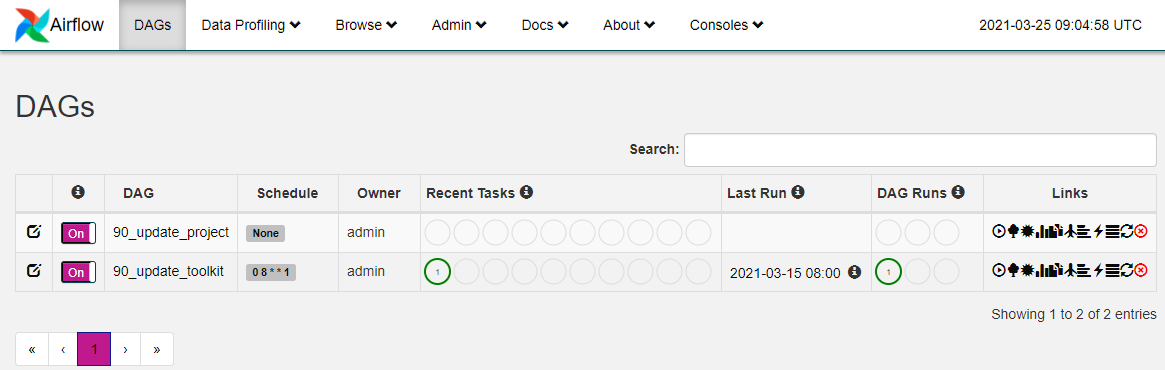

Plus, this is still only the brownout period, so the protocol will only be disabled for a short period of time, allowing developers to discover the problem. Personally, I consider it less an "issue" and more "detecting unmaintained dependencies". The entire Internet has been moving away from unauthenticated, unencrypted protocols for a decade; it's not like this is a huge surprise. Second, check your package.json dependencies for any git:// URL, as in this example, fixed in this PR. This will help clients discover any lingering use of older keys or old URLs. MWAA uses S3 as a storage layer for the code, i.e., DAG files, plugin code, and the requirements.txt used to install additional Python packages within the Airflow environment. AWS utilizes RDS Aurora (Postgres) for that purpose. This is the full brownout period where we'll temporarily stop accepting the deprecated key and signature types, ciphers, and MACs, and the unencrypted Git protocol. Airflow relies on a metadata database that stores information about your workflows. See "Improving Git protocol security on GitHub". poke_interval (int) - Poke interval to check dag run status when wait_for_completion=True. First, this error message is indeed expected on Jan. wait_for_completion (bool) - Whether or not to wait for dag run completion. When reset_dag_run=True and the dag run exists, the existing dag run will be cleared to rerun. Caution: You can only list and edit DAGs created by DAG Creation Manager. The plugin also provides other custom features for your DAG. A plugin for Apache Airflow that creates and manages your DAGs with a web UI. When reset_dag_run=False and the dag run exists, DagRunAlreadyExists will be raised. Airflow DAG Creation Manager Plugin Description.
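The package.json check mentioned above (looking for any git:// URL among the dependencies) could be sketched roughly as follows. This is only an illustration: the function names `find_git_protocol_deps` and `suggest_https`, the sample manifest, and the `example/legacy-lib` repository are all made up for the example, and rewriting to `git+https://` is one possible replacement, not necessarily the one used in the PR referenced above.

```python
import json

# Sections of package.json that can contain URL-style dependency specifiers.
DEP_SECTIONS = (
    "dependencies",
    "devDependencies",
    "optionalDependencies",
    "peerDependencies",
)


def find_git_protocol_deps(package_json_text):
    """Return (section, name, spec) for each dependency pinned to a git:// URL."""
    manifest = json.loads(package_json_text)
    hits = []
    for section in DEP_SECTIONS:
        for name, spec in manifest.get(section, {}).items():
            # Only the unencrypted git:// scheme is affected; git+https:// is fine.
            if isinstance(spec, str) and spec.startswith("git://"):
                hits.append((section, name, spec))
    return hits


def suggest_https(spec):
    """Hypothetical helper: swap the git:// prefix for git+https://."""
    if spec.startswith("git://"):
        return "git+https://" + spec[len("git://"):]
    return spec


# Made-up manifest used purely for illustration.
SAMPLE_PACKAGE_JSON = """
{
  "name": "demo",
  "dependencies": {
    "left-pad": "^1.3.0",
    "legacy-lib": "git://github.com/example/legacy-lib.git#v1.0.0"
  },
  "devDependencies": {
    "tooling": "git+https://github.com/example/tooling.git"
  }
}
"""

if __name__ == "__main__":
    for section, name, spec in find_git_protocol_deps(SAMPLE_PACKAGE_JSON):
        print(f"{section}: {name} uses {spec}; consider {suggest_https(spec)}")
```

Note that this only inspects the top-level manifest; git:// URLs pulled in by transitive dependencies would show up in the lock file instead, which this sketch does not read.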

This is useful when backfilling or rerunning an existing dag run. reset_dag_run (bool) - Whether or not to clear the existing dag run if it already exists. trigger_dag_id (str) - the dag_id to trigger (templated). conf (dict) - Configuration for the DAG run. execution_date (str or datetime.datetime) - Execution date for the dag (templated). Triggers a DAG run for a specified dag_id. Parameters. Extensible: Easily define your own operators, executors and extend the library so that it fits the level of abstraction that suits your environment. TriggerDagRunOperator(*, trigger_dag_id: str, conf: Optional[Dict] = None, execution_date: Optional[Union[str, datetime.datetime]] = None, reset_dag_run: bool = False, wait_for_completion: bool = False, poke_interval: int = 60, allowed_states: Optional[List] = None, failed_states: Optional[List] = None, **kwargs) Dynamic: Airflow pipelines are configuration as code (Python), allowing for dynamic pipeline generation. This allows for writing code that instantiates pipelines dynamically. You would want to use this command if you want to reduce the size of the metadata database. Airflow 2.3.0 introduces a new airflow db clean command that can be used to purge old data from the metadata database. Screenshots: Purge history from metadata database. Throughout 2020, various organizations and leaders within the Airflow Community collaborated closely to refine the scope of Airflow 2. In your case, it has to be something like: from operators. name = Triggered DAG get_link(self, operator, dttm) class _dagrun. Grid view replaces tree view in Airflow 2.3.0. In Airflow 2.0, to import your plugin you just need to do it directly from the operators module. It allows users to access the DAG triggered by a task using TriggerDagRunOperator.
